The Merge of Cybersecurity and AI in Big Business: Why the Attack Surface Has Shifted

Artificial intelligence and cybersecurity are no longer separate disciplines—they’ve fused into a single ecosystem that defines modern enterprise risk. What used to be a predictable battlefield of malware, phishing, and misconfigurations has transformed into an unpredictable landscape where LLMs generate attacks, autonomous agents scan networks, AI-powered defenses react in milliseconds, and new vulnerabilities emerge inside the very models enterprises use to stay competitive.

The shift didn’t happen overnight. AI crept into workflows as a helper—writing emails, analyzing logs, automating tasks. But today, AI has become both the weapon and the target, and big businesses are realizing their traditional security frameworks can’t keep up. The attack surface has expanded beyond endpoints and cloud servers into models, data pipelines, APIs, prompts, plugins, and human decision-making itself.

This is the new frontier of enterprise cybersecurity—and it’s evolving faster than any previous security era.

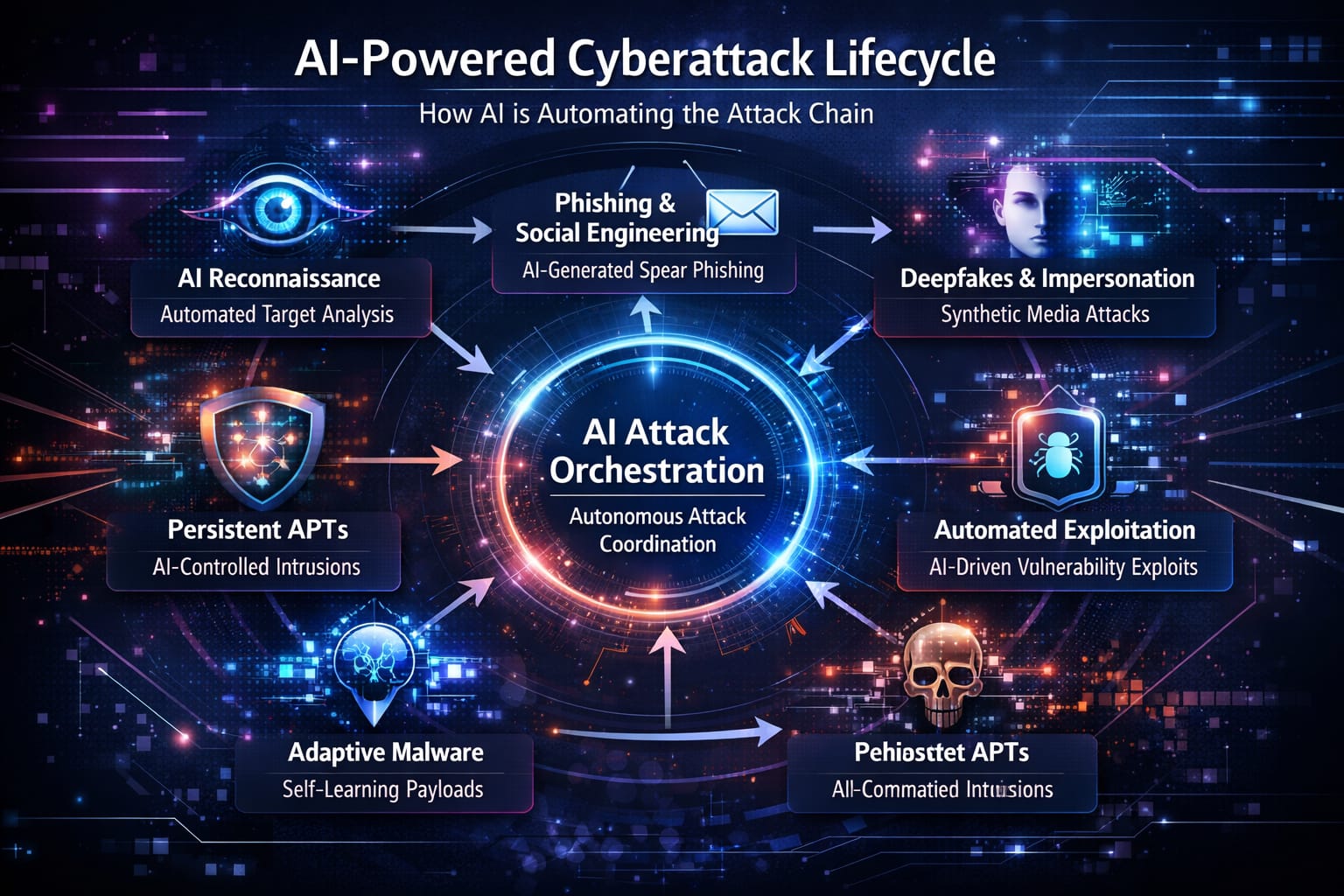

1. The Rise of AI-Powered Cyber Offense

1.1 Autonomous and Semi-Autonomous Attacks

Cyberattacks used to require time, skill, and coordination. Now, with AI agents capable of executing multi-step tasks, the entire economics of hacking has shifted. Malicious actors can use AI to:

- Map an organization’s network

- Identify misconfigurations

- Generate exploit code

- Draft phishing campaigns

- Create fraudulent documents or voice recordings

- Automate data exfiltration

This is no longer hypothetical technology. Attackers are already leveraging AI to run operations with minimal human intervention, turning what was once a difficult operation into something nearly effortless.

The major shift: scale.

One attacker with a powerful AI agent can behave like a team of 20.

1.2 LLM-Driven Social Engineering

As AI becomes conversational, spear-phishing becomes indistinguishable from legitimate communication. Models can learn a person’s tone, vocabulary, and writing habits from public data and replicate them perfectly.

This enables:

- Business email compromise with flawless grammar and context

- Impersonation of executives or partners

- Multi-turn phishing conversations that adapt to the victim

- Real-time manipulation during customer service chats

We’ve entered a world where phishing isn’t a generic blast—it’s a personalized script written for one individual, crafted by an AI that understands psychology as well as language.

1.3 Deepfakes and Influence Operations

Deepfake audio and video—once expensive and easily spotted—are now cheap, fast, and eerily realistic. Attackers can create:

- Fake voice messages from a CEO instructing urgent wire transfers

- Fraudulent “security alerts” recorded by a real-looking employee avatar

- Synthetic documents or screenshots used to “prove” false narratives

The modern attack surface includes not just networks and devices but perception itself.

Big businesses must now protect the integrity of their communications, brands, and public messaging.

2. How AI Is Transforming the Defender’s Playbook

2.1 AI in the Security Operations Center

Companies use AI to monitor billions of events per day—something no human team could handle. AI now:

- Flags anomalies in user behavior

- Groups related alerts

- Identifies lateral movement

- Suggests remediation steps

- Writes incident reports

- Calculates risk scores

AI makes the SOC faster and more efficient. But it also introduces a new dependency:

If your security AI is compromised, misconfigured, or manipulated, your entire defense system can be steered off course.

2.2 Automated Response

Endpoint protection tools can now isolate a device, kill malicious processes, and roll back changes automatically. This “self-healing” approach reduces dwell time dramatically.

But automated response raises questions:

- What if the AI isolates the wrong system?

- What if a prompt injection manipulates network behavior?

- What if a jailbreak causes the AI to leak sensitive context?

In the AI era, defenders must secure not just their systems, but the logic driving their systems.

2.3 Cloud and SaaS Security Powered by ML

As enterprises migrate to multi-cloud and SaaS, the complexity skyrockets. AI helps by:

- Identifying misconfigured storage buckets

- Mapping exposed data across cloud regions

- Understanding cross-SaaS permissions

- Spotting unusual API behavior

- Ensuring compliance across hundreds of services

Yet this introduces new risk: AI models processing sensitive data must be protected from leaking, poisoning, or being reverse-engineered.

3. The New Attack Surface: Why Everything Has Shifted

3.1 AI Models as Assets — and Liabilities

Every enterprise using AI is now protecting:

- Proprietary models

- Fine-tuned weights

- Embeddings

- Training data

- Vector databases

- Internal knowledge bases

These are high-value targets. A stolen model can reveal:

- Intellectual property

- Business logic

- Sensitive internal data

- Strategic decision-making patterns

Models can also output sensitive data accidentally if prompts or training data weren’t properly sanitized.

3.2 Prompt Injection and Model Manipulation

Unlike traditional software, which follows deterministic logic, AI models can be tricked, steered, or overridden through crafted inputs.

Prompt injection can cause:

- Leakage of internal corporate information

- Bypassing of safety protocols

- Execution of unauthorized actions through plugins

- Manipulation of summaries, recommendations, or reports

- Incorrect risk assessments or false negatives in security alerts

This is the AI equivalent of SQL injection—except the attack vector can appear inside emails, documents, websites, or even a PDF an employee opens.

3.3 Data Pipelines as a Soft Underbelly

AI needs data—lots of it. That means enterprises often aggregate:

- Customer records

- Logs

- Chat transcripts

- Sales interactions

- Support tickets

- Legal documents

- Financial reports

This creates several risks:

- Sensitive data being used in prompts

- Shadow datasets not governed by compliance

- Model retraining on untrusted inputs

- Data poisoning through manipulated logs

- Accidental mixing of confidential and public data

The traditional attack surface was a system.

The new attack surface is the entire flow of data into and out of models.

3.4 Shadow AI in Every Department

Employees freely experiment with AI tools:

- Unapproved chatbots

- Free browser-based AI utilities

- SaaS tools with unknown retention policies

- Plugins connected to production data

- AI assistants handling sensitive tasks

Security teams cannot protect what they cannot see.

In many companies, “shadow AI” is bigger than official AI.

3.5 The Human-AI Hybrid Workforce

As employees rely more on AI, attackers exploit new vulnerabilities:

- Over-trust in AI summaries

- Manipulation of AI-generated instructions

- Employees pasting sensitive data into chatbots

- AI hallucinations treated as fact

- AI-created “safe” links or files that contain hidden payloads

AI changes human behavior—and attackers target those behavioral shifts.

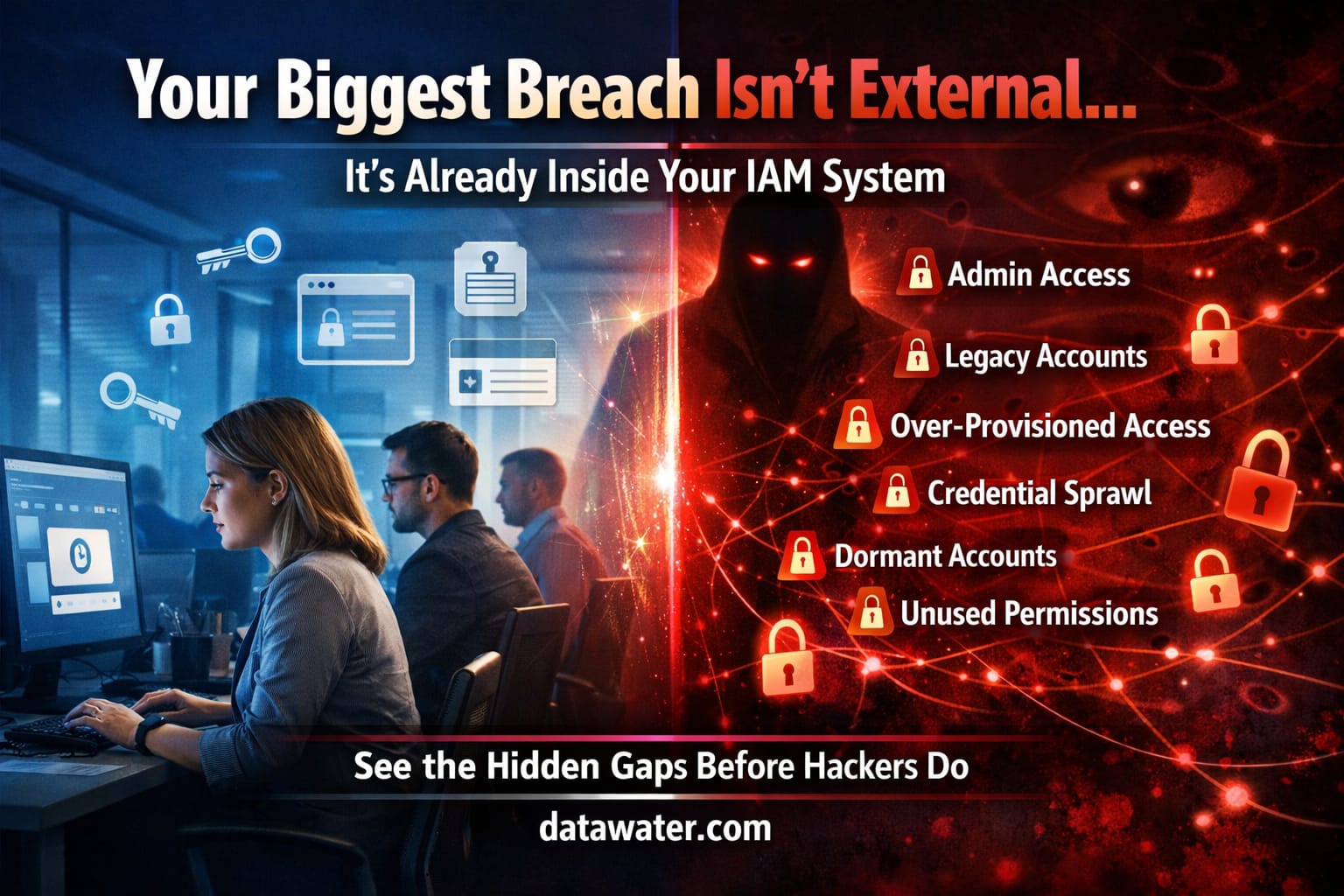

4. The Governance Gap: Where Big Businesses Are Still Exposed

Even companies with strong cybersecurity programs face new AI-specific gaps:

4.1 Fragmented Ownership

Security teams oversee firewalls, networks, and EDR.

Data science teams run models, training pipelines, and datasets.

Product teams deploy AI-driven features.

Who owns:

- prompt safety?

- training data governance?

- model access controls?

- plugin permissions?

- agent autonomy limits?

Without a unified framework, AI security falls into cracks.

4.2 Outdated Risk Management

Classic frameworks weren’t built for AI systems that:

- generate unpredictable outputs

- store context temporarily

- learn from user interactions

- integrate with external tools

- act on behalf of humans

AI requires a new category of risk thinking.

4.3 Lack of AI Red Teaming

Most companies test their networks—but not their models.

AI red teaming should include:

- prompt injection

- jailbreak attempts

- poisoning attacks

- model extraction

- manipulation of agent behavior

- deepfake-enabled social engineering

Defending AI requires testing AI like an adversary would.

5. What Big Businesses Must Do Next

5.1 Treat AI Systems as Critical Infrastructure

Enterprises must catalog and secure:

- every model

- every fine-tuned version

- every dataset

- every API connection

- every plugin

- every internal agent

If it processes sensitive data or makes decisions, it’s an asset worth protecting.

5.2 Secure Data Pipelines and Model Inputs

This includes:

- strict data classification

- guardrails preventing sensitive data in prompts

- filtering and sanitization layers

- strict access controls for datasets

- automated scanning for poisoned data

Your model is only as safe as the data flowing into it.

5.3 Implement AI Security Posture Management

Companies now need continuous monitoring for:

- model misconfigurations

- plugin permissions

- API access patterns

- shadow AI usage

- sensitive data exposure

- anomalous agent behavior

This is the AI equivalent of cloud security posture management—just for models and workflows.

5.4 Build AI-Literate Security Teams

Security engineers must understand:

- LLM behavior

- agent frameworks

- vector databases

- training pipelines

- prompt vulnerabilities

And data scientists must learn:

- secure coding

- identity and access control

- cloud security

- threat modeling

Cybersecurity and AI teams must become one integrated function.

5.5 Prepare Leadership for AI-Driven Crisis Scenarios

Boards and executives need playbooks for:

- AI-assisted ransomware

- deepfake corporate fraud

- model leaks

- agent malfunction

- compromised AI chat systems

- AI-generated misinformation targeting customers or investors

AI risk is now a business risk—not just a technical one.

6. The Bottom Line: AI Is Both the Shield and the Surface

Modern enterprises face a dual reality:

- Attackers use AI to scale and personalize cyberattacks like never before.

- Defenders use AI to detect, respond, and automate security at unprecedented speed.

- AI itself—its models, data, pipelines, and plugins—has become the new attack surface.

The merge of cybersecurity and AI means organizations must rethink security from the ground up.

The companies that thrive will be those that:

- Govern AI with intention

- Protect the data feeding their models

- Monitor every layer of their AI stack

- Red-team their AI systems as aggressively as attackers

- Train their people to understand AI-driven risks

- Treat AI as part of core cybersecurity—not a separate project

The future of enterprise security is not security plus AI.

It is security built around AI, for a world where the threat landscape grows smarter every day.