The AI Attack Surface Has Shifted — And Most Enterprises Aren’t Ready

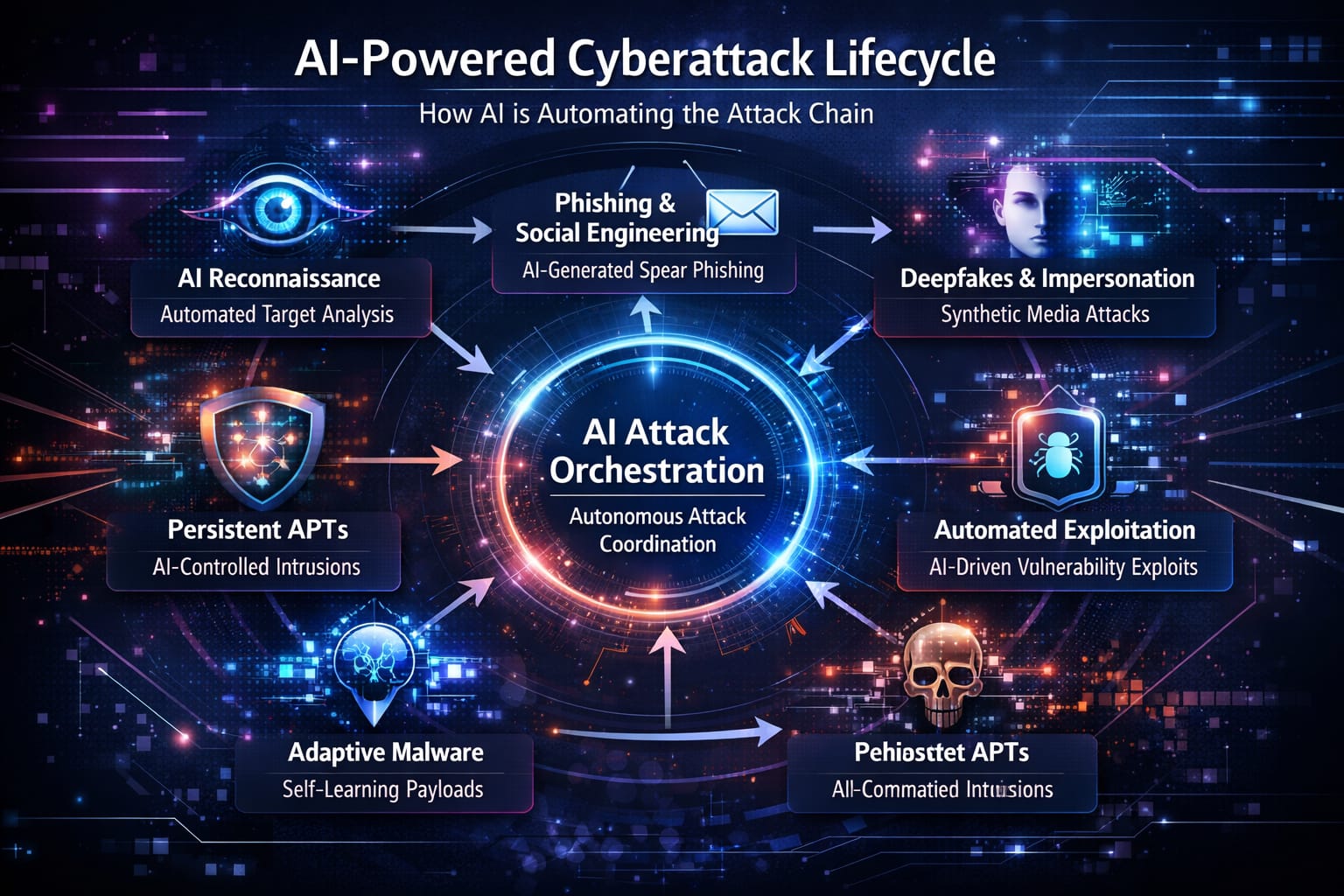

Artificial intelligence is no longer just another tool in the attacker’s kit — it has become the core driver of modern cyberattacks. Over the last year, state-sponsored groups, cybercriminals, and automated adversaries have demonstrated something shocking: AI can now automate the overwhelming majority of a sophisticated attack chain, from reconnaissance to exploitation to exfiltration.

This marks a turning point. The attack surface has moved. It’s no longer confined to endpoints, networks, identities, or cloud consoles. The AI era introduces a new security frontier — one that most enterprises are dangerously unprepared to defend.

Below is a deep dive into how the AI attack surface has evolved, what new risks security teams must address, and the roadmap enterprises need to secure the age of intelligent threats.

From Firewalls to Prompts: The New AI Attack Surface

For decades, cybersecurity was built around well-defined perimeters:

- Networks

- Endpoints

- Applications

- Cloud and identity

AI shatters these boundaries in three ways:

1. AI Is Now a Primary Attack Vector

Attackers are using large language models (LLMs) to:

- Generate phishing campaigns tailored to individuals

- Rewrite or refactor malware

- Discover vulnerabilities at scale

- Build sophisticated social-engineering scripts

- Automate tasks that previously required skilled operators

The difference today is speed and volume. A single attacker can now generate thousands of unique payloads, phishing emails, or exploits within minutes. AI removes the bottleneck of human time and human learning — dramatically expanding the threat landscape.

2. AI Expands Your Internal Attack Surface

Every enterprise is plugging AI tools into its systems:

- Slack copilots

- CRM assistants

- AI customer service agents

- RAG systems connected to internal knowledge bases

- LLMs inside ticketing and DevOps pipelines

- Developer copilots integrated with code repos

When an AI system can read or write data, or trigger actions, it becomes part of your operational fabric — and therefore part of your attack surface.

LLMs with access to internal tools can unintentionally:

- Modify data

- Leak sensitive information

- Execute actions due to manipulated prompts

- Access documents they were never meant to see

AI doesn’t just introduce risk — it amplifies it.

3. AI Creates an Entirely New “Semantic Layer”

Traditional security protects infrastructure. AI introduces a new boundary: the semantic layer, the world of prompts, embeddings, and natural-language instructions.

This layer can be manipulated. It is dynamic, context-driven, and often invisible to traditional tools.

If your security stack can’t monitor prompts, content flows, or agent behavior, it cannot truly protect your AI systems.

New AI-Native Threats That Most Enterprises Cannot See

AI brings a completely new category of vulnerabilities. These threats don’t look like SQL injections or ransomware — they exploit how models think, interpret, and act on information.

Here are the biggest AI-native threats every enterprise must address.

1. Prompt Injection & the Semantic Supply Chain

Prompt injection is the most common and most dangerous AI attack.

It works by embedding malicious instructions into content the model will read:

- Emails

- PDFs

- Ticket descriptions

- Webpages

- Images with hidden text

- Chat messages

Once an AI assistant reads compromised content, the attacker-controlled instructions override your intended behavior.

Why this matters:

If your LLM is integrated with tools — such as CRM, GitHub, cloud consoles, or automation systems — a prompt injection can trigger:

- Unauthorized code changes

- Leaked credentials

- Mass emails

- Changed security settings

- Deleted records

- Unauthorized access provisioning

This is the first major example of an attack vector targeting language instead of code.

2. Data Poisoning & Model Integrity Attacks

Any AI system trained or fine-tuned on external or user-generated data is susceptible to poisoning attacks.

Adversaries can intentionally plant bad data that:

- Inserts backdoor behaviors

- Corrupts the model’s accuracy

- Causes hallucinated outputs

- Degrades performance on specific tasks

- Steers the model toward attacker goals

As AI becomes more autonomous and more integrated with decision-making, the consequences of poisoned data become catastrophic.

3. AI Supply Chain Vulnerabilities

Enterprises rarely build AI systems from scratch — they integrate:

- Model APIs

- Open-source LLMs

- Vector databases

- Orchestration frameworks

- Plugins and agents

- Third-party copilots

- AI automation tools

Every one of these components is:

- A dependency

- A potential CVE

- A potential injection point

- A risk multiplier

Many LLM orchestration frameworks have already had documented vulnerabilities leading to prompt hijacking, data leakage, and privilege escalation.

If you’re not auditing your AI supply chain, you’re blind to one of the fastest-growing security risks in the enterprise.

4. Model Abuse, Jailbreaking & Autonomous Adversaries

Attackers are now jailbreaking AI systems to:

- Bypass safety controls

- Generate harmful content

- Assist in attack planning

- Locate vulnerabilities

- Build custom exploits

- Run full-scale autonomous campaigns

The line between “AI helper” and “AI attacker” is thinner than ever.

As commercial models become more capable, jailbreaking becomes more valuable — and more dangerous.

Why Most Enterprises Aren’t Ready

Even organizations with mature cybersecurity programs still struggle with AI security. The reasons fall into four key gaps:

1. Shadow AI Is Everywhere

Business units deploy AI tools faster than security can assess them:

- Marketing installs an AI writing tool

- Ops deploys an AI bot in Slack

- Finance experiments with GPT data analysis

- Product teams roll out AI copilots

Most of this happens without security approval or visibility.

Shadow AI is the new shadow IT — but far more dangerous.

2. Traditional Security Tools Can’t See AI Behavior

Security controls built for the cloud era cannot detect:

- Malicious prompts

- Covert instruction injection

- Unexpected agent behavior

- Hallucinated API calls

- Sensitive data leaking through model outputs

- Automated actions triggered by manipulated content

Firewalls don’t read context windows.

DLP tools don’t scan prompts.

SIEMs don’t track LLM tool usage.

The entire AI interface layer sits outside the visibility of most security programs.

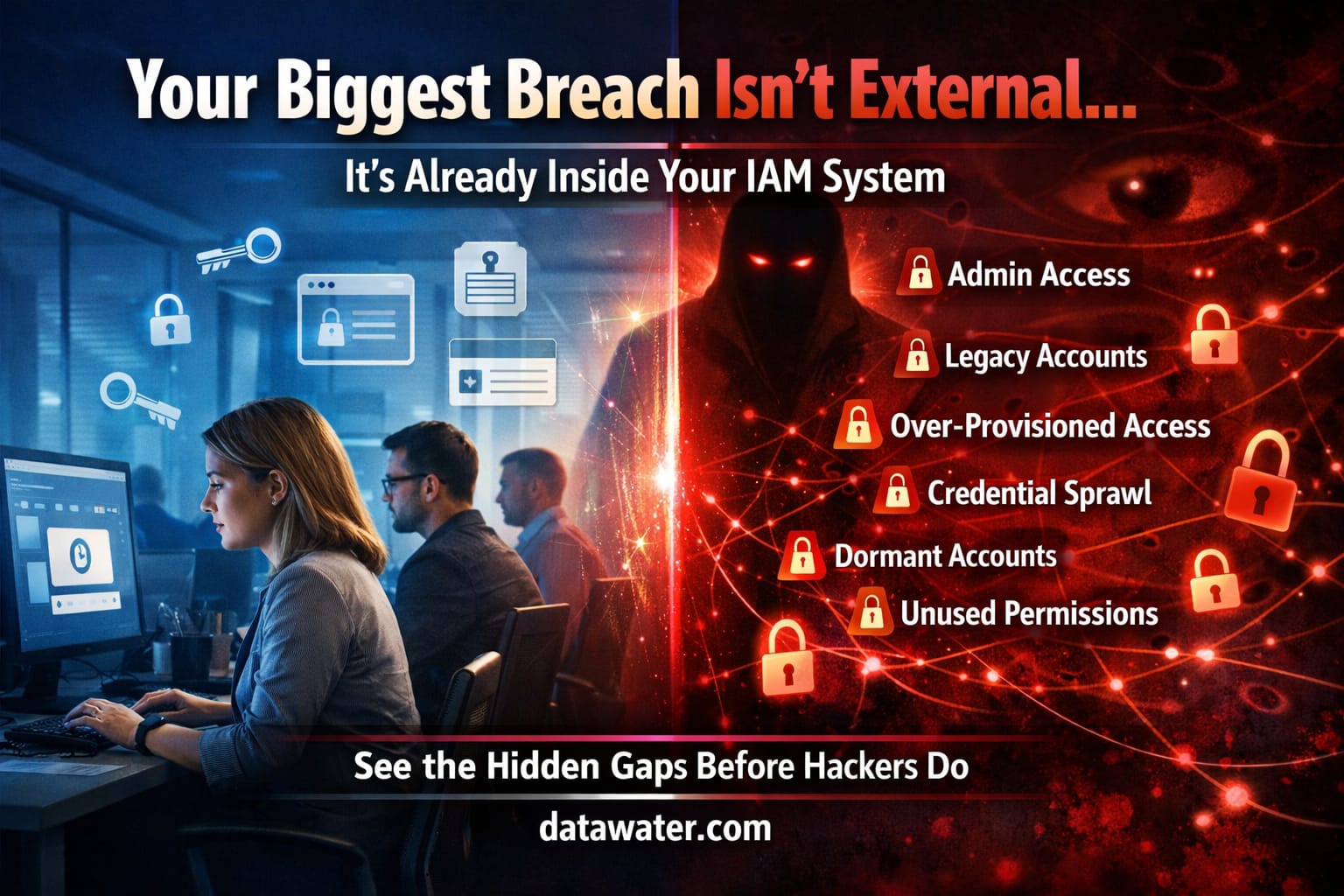

3. No AI-Specific Risk Framework

Most risk programs treat AI as “just another app,” which means:

- No model governance

- No prompt security

- No AI-specific threat modeling

- No continuous monitoring of AI behavior

- No supply chain oversight

- No policies for agent access or tool permissions

This is like running a cloud environment with no IAM policy — a massive blind spot.

4. Security and ML Teams Aren’t Aligned

Security teams talk in:

- CVEs

- MITRE ATT&CK

- Zero trust

- Access control

- Threat intel

ML teams talk in:

- Embeddings

- Vector stores

- Fine-tuning

- RAG

- Tokens

If these groups aren’t collaborating, AI will enter production with critical vulnerabilities unaddressed.

A Four-Step Playbook to Secure the New AI Attack Surface

Here’s how enterprises can build an AI security posture that actually works.

1. Build a Complete AI Asset Inventory

Catalog:

- All LLMs

- Plugins

- Frameworks

- Agents

- Datasets

- Integrations

For each, determine:

- What data can it access?

- What actions can it take?

- What tools can it call?

- What identities does it impersonate?

You cannot secure what you cannot see.

2. Protect the Semantic Layer

For systems exposed to user content, implement:

- Prompt injection defenses

- Content sanitization

- Output filtering

- System-level prompt protections

- Guardrails around allowed actions

- Human-in-the-loop approvals for high-risk tasks

Your “WAF for the semantic layer” should be as strict as your WAF for APIs.

3. Secure the AI Supply Chain

Apply best practices from software security to AI:

- Dependency scanning

- Structured model versioning

- Signed model artifacts

- Plugin permission minimization

- Regular red-teaming of AI workflows

Everything plugged into your AI system is an attack surface.

4. Continuously Monitor AI Behavior

Your SOC should be able to answer:

- What prompts were submitted today?

- What data did the AI touch?

- What tool actions were executed?

- Where did it send outputs?

- What anomalies occurred?

Log:

- Prompts

- Outputs

- Agent actions

- API calls

- Data access events

If you don’t have visibility into what your AI systems do, you cannot defend against what they might do.

The Bottom Line

The AI attack surface has shifted — from endpoints and firewalls to prompts, embeddings, agents, and autonomous systems.

Attackers already understand this. Most enterprises don’t.

The organizations that thrive in the AI era will be those that:

- Treat AI as part of their core attack surface

- Protect the semantic layer with the same rigor as cloud and identity

- Implement governance frameworks built specifically for AI

- Build continuous monitoring of model behavior

- Align security, ML, and engineering into one unified discipline

The future of cybersecurity isn’t just about protecting infrastructure.

It’s about protecting intelligent systems capable of reading, writing, deciding — and acting.

This is the new battlefield.

Enterprises that adapt will survive.

Those that don’t will be caught off guard by an attacker they can no longer outpace.